
Examples include IBM TrueNorth Neurosynaptic System ( Merolla et al., 2014), NeuroFlow ( Cheung et al., 2016), Neurogrid ( Benjamin et al., 2014), SpiNNaker ( Furber et al., 2014), and the BrainScaleS project ( Schemmel et al., 2008), all of which are implemented using Si CMOS. Recent advances in neuromorphic engineering ( Schuman et al., 2017) have motivated the development of neural hardware substrates that are tailored to loosely emulate computations that happen in a human brain with extremely low power and efficiency. Experiments with optical flow demonstrate the energy benefits of deploying a reduced-precision and energy-efficient generalized matrix inverse engine on the IBM TrueNorth platform, reflecting 10× to 100× improvement over FPGA and ARM core baselines. The analytical model is empirically validated on the IBM TrueNorth platform, and results show that the guarantees provided by the framework for range and precision hold under experimental conditions. The paper derives techniques for normalizing inputs and properly quantizing synaptic weights originating from arbitrary systems of linear equations, so that solvers for those systems can be implemented in a provably correct manner on hardware-constrained neural substrates. This paper discusses these challenges and proposes a rigorous mathematical framework for reasoning about range and precision on such substrates. However, deploying numerical algorithms on hardware platforms that severely limit the range and precision of representation for numeric quantities can be quite challenging. For example, a recurrent Hopfield neural network can be used to find the Moore-Penrose generalized inverse of a matrix, thus enabling a broad class of linear optimizations to be solved efficiently, at low energy cost.

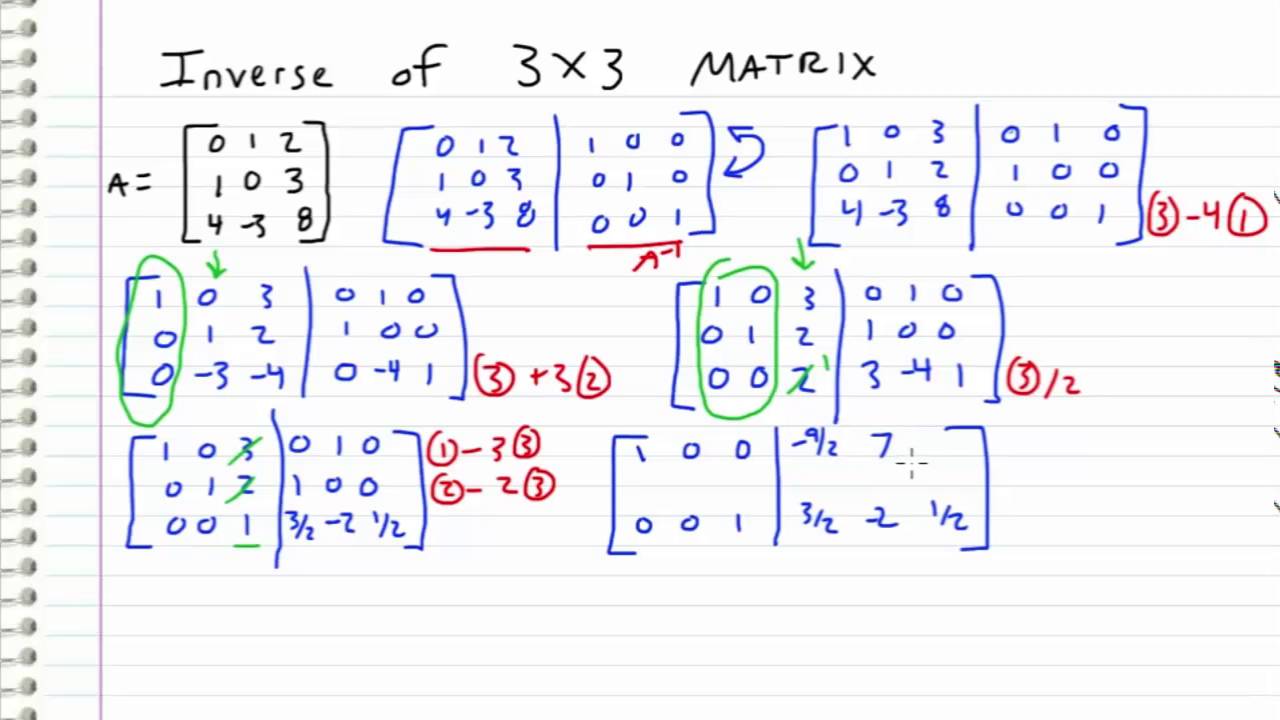

3Department of Computer Sciences, University of Wisconsin-Madison, Madison, WI, United StatesĮmerging neural hardware substrates, such as IBM's TrueNorth Neurosynaptic System, can provide an appealing platform for deploying numerical algorithms.2Department of Electrical and Computer Engineering, Georgia Institute of Technology, Atlanta, GA, United States.1Department of Electrical and Computer Engineering, University of Wisconsin-Madison, Madison, WI, United States.The adjugate matrix is noted as Adj(M).Rohit Shukla 1 *, Soroosh Khoram 1, Erik Jorgensen 2, Jing Li 1, Mikko Lipasti 1 and Stephen Wright 3 This is sometimes referred to as the adjoint matrix. The final result of this step is called the adjugate matrix of the original.They are indicators of keeping (+) or reversing (-) whatever sign the number originally had. Note that the (+) or (-) signs in the checkerboard diagram do not suggest that the final term should be positive or negative. Continue on with the rest of the matrix in this fashion.

The third element keeps its original sign.
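The checkerboard sign rule can be illustrated with a minimal Python sketch. It is not taken from the original text: the helper names `minor`, `det`, and `adjugate` and the sample matrix `M` are assumptions chosen for illustration. The sketch computes each cofactor first and transposes at the end, which gives the same Adj(M) as transposing before taking the minors.

```python
def minor(m, i, j):
    """Return the submatrix of m with row i and column j removed."""
    n = len(m)
    return [[m[r][c] for c in range(n) if c != j] for r in range(n) if r != i]


def det(m):
    """Determinant by cofactor expansion along the first row."""
    if len(m) == 1:
        return m[0][0]
    return sum((-1) ** j * m[0][j] * det(minor(m, 0, j)) for j in range(len(m)))


def adjugate(m):
    """Adj(m): the transpose of the cofactor matrix.

    The factor (-1) ** (i + j) is the checkerboard: +1 keeps the sign of
    the minor's determinant, -1 reverses it, whatever that sign was.
    """
    n = len(m)
    cofactors = [
        [(-1) ** (i + j) * det(minor(m, i, j)) for j in range(n)]
        for i in range(n)
    ]
    # Transpose the cofactor matrix to obtain the adjugate.
    return [[cofactors[j][i] for j in range(n)] for i in range(n)]


# Hypothetical sample matrix, chosen only for illustration.
M = [[1, 2, 3],
     [0, 1, 4],
     [5, 6, 0]]

adj = adjugate(M)
```

When det(M) is nonzero, dividing every entry of Adj(M) by det(M) gives the inverse of M. Cofactor expansion is only practical for small matrices such as the 3×3 case here.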
